CrossPoolMigrationv3: Difference between revisions

From Xen


Dave.scott (talk | contribs) No edit summary

Dave.scott (talk | contribs) No edit summary |

||

| Line 1: | Line 1: | ||

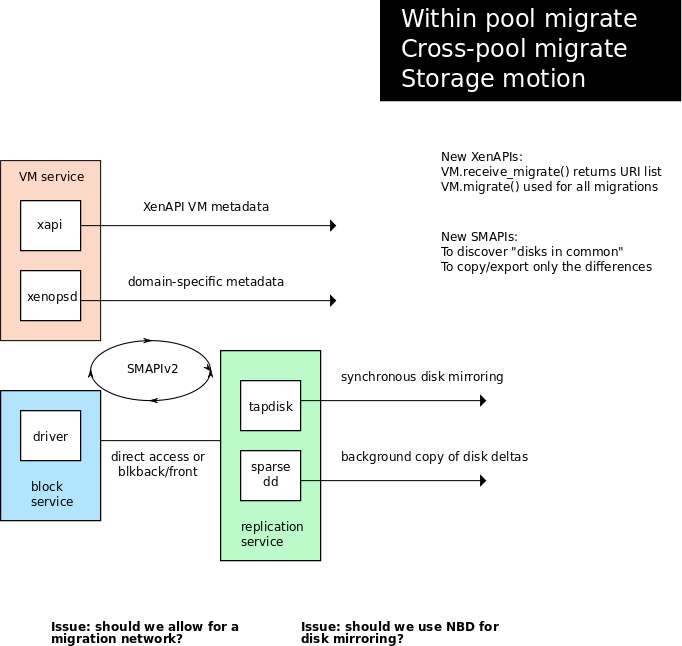

This page describes a possible design for Cross-pool migration (which also works for within-pool migration with and without shared storage). |

This page describes a possible design for Cross-pool migration (which also works for within-pool migration with and without shared storage). |

||

This design has the following features: |

|||

# disks are replicated between the two sites/SRs using a "replication service" which is aware of the underlying disk structure (e.g. in the case of .vhd it can use the sparseness information to speed up the copying) |

|||

# the mirror is made synchronous by using the *tapdisk* "mirror" plugin (the same as used by the existing disk caching feature) |

|||

# the pool-level VM metadata is export/imported by xapi |

|||

# the domain-level VM metadata is export/imported by xenopsd |

|||

See the following diagram: |

|||

[[File:components3.png]] |

[[File:components3.png]] |

||

This design has the following advantages: |

|||

# by separating the act of mirroring the disks (like a storage array would do) from the act of copying a running memory image, we don't need to hack libxenguest. There is a clean division of responsibility between managing storage and managing running VMs. |

|||

# by creating a synchronous mirror, we don't increase the migration blackout time |

|||

# we can re-use the disk replication service to do efficient cross-site backup/restore (ie to make periodic VM snapshot and archive use an incremental archive) |

|||

Revision as of 11:45, 3 January 2012

This page describes a possible design for Cross-pool migration (which also works for within-pool migration with and without shared storage).

This design has the following features:

- disks are replicated between the two sites/SRs by a "replication service" that is aware of the underlying disk structure (e.g. for .vhd it can use the sparseness information to skip unallocated blocks and so speed up the copy)

- the mirror is made synchronous by using the *tapdisk* "mirror" plugin (the same as used by the existing disk caching feature)

- the pool-level VM metadata is exported and imported by xapi

- the domain-level VM metadata is exported and imported by xenopsd
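The first two features can be illustrated with a small in-memory model. This is only a sketch under stated assumptions: `SparseDisk`, `Mirror` and `replicate` are hypothetical names invented for illustration, not part of tapdisk, xapi or any replication service. A disk is modelled as a map from block index to data, so the keys of the map play the role of the sparseness information in a .vhd.

```python
BLOCK_SIZE = 4096  # assumed block size, for illustration only


class SparseDisk:
    """Hypothetical sparse disk: only allocated blocks are stored."""

    def __init__(self, n_blocks):
        self.n_blocks = n_blocks
        self.blocks = {}  # block index -> bytes; missing keys are unallocated

    def _check(self, index):
        if not 0 <= index < self.n_blocks:
            raise IndexError(f"block {index} out of range")

    def write(self, index, data):
        self._check(index)
        if len(data) != BLOCK_SIZE:
            raise ValueError("data must be exactly one block")
        self.blocks[index] = data

    def read(self, index):
        self._check(index)
        # Unallocated blocks read back as zeroes.
        return self.blocks.get(index, bytes(BLOCK_SIZE))

    def allocated(self):
        return sorted(self.blocks)


class Mirror:
    """Hypothetical synchronous mirror: a write returns only after
    both the primary and the secondary have accepted it."""

    def __init__(self, primary, secondary):
        self.primary = primary
        self.secondary = secondary

    def write(self, index, data):
        self.primary.write(index, data)
        self.secondary.write(index, data)


def replicate(src, dst):
    """Copy only the allocated blocks of src to dst; return how many were copied."""
    copied = 0
    for index in src.allocated():
        dst.write(index, src.read(index))
        copied += 1
    return copied
```

In this model a 1024-block disk with two allocated blocks costs two block copies rather than 1024, and every write issued through `Mirror` after that point lands on both disks, so the two stay identical.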

See the following diagram:

[[File:components3.png]]

This design has the following advantages:

- by separating the act of mirroring the disks (like a storage array would do) from the act of copying a running memory image, we don't need to hack libxenguest. There is a clean division of responsibility between managing storage and managing running VMs.

- because the mirror is synchronous, the disks are already identical on both sides when the running VM is switched over, so we don't increase the migration blackout time

- we can re-use the disk replication service to do efficient cross-site backup/restore (i.e. so that periodic VM snapshot-and-archive can use incremental archives)
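The incremental archive idea in the last point can be sketched as follows. This is an illustration under assumptions, not the design's actual archive format: a snapshot is modelled as a map from block index to data, and `diff_snapshots` and `apply_increment` are hypothetical helper names.

```python
from typing import Dict, Set, Tuple

Snapshot = Dict[int, bytes]  # block index -> block contents (allocated blocks only)


def diff_snapshots(old: Snapshot, new: Snapshot) -> Tuple[Dict[int, bytes], Set[int]]:
    """Return the blocks that changed or appeared in `new`,
    plus the indices of blocks that were deallocated since `old`."""
    changed = {i: data for i, data in new.items() if old.get(i) != data}
    removed = {i for i in old if i not in new}
    return changed, removed


def apply_increment(base: Snapshot, changed: Dict[int, bytes], removed: Set[int]) -> Snapshot:
    """Rebuild the newer snapshot from an archived base and one increment."""
    result = {i: data for i, data in base.items() if i not in removed}
    result.update(changed)
    return result
```

Only the `changed` blocks and the `removed` indices need to cross the site link for each periodic archive; the remote side applies them to the previously archived snapshot to restore the newer one.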